1. I. Introduction

perform exploratory statistics evaluation and carried out research. The engineering group are regularly uncertain what to do inside the meanwhile, developing the "pull of the waterfall," wherein supposedly agile tasks tackle characteristics of the waterfall. Agile records technology bridges this gap among the 2 groups, developing a more powerful alignment of their efforts.

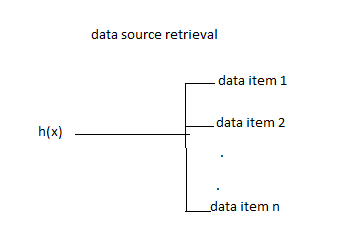

This e book is likewise approximately "massive statistics." Agile facts science is a improvement technique that copes with the unpredictable realities of making analytics packages from information at scale. It's miles a theoretical and technical guide for operating a Spark records refinery to harness the energy of the "huge records" in your employer. Warehouse-scale computing has given us significant storage and compute resources to clear up new kinds of troubles concerning storing and processing extraordinary quantities of information. There may be tremendous hobby in bringing new gear to endure on previously intractable problems, allowing us to derive totally new products from raw statistics, to refine raw records into profitable insights, and to productize and productionize insights in new kinds of analytics applications. These gears are processor cores and disk spindles, paired with visualization, information, and gadget learning. That is records technological know-how.

On the same time, during the last twenty years, the sector huge net has emerged because the dominant medium for statistics trade. For the duration of this time, software engineering has been converted through the "agile" revolution in how programs are conceived, constructed, and maintained. Those new procedures convey in more projects and merchandise on time and under budget, and permit small groups or single actors to develop whole applications spanning extensive domain names. That is agile software development.

However there's a problem. working with real data inside the wild, doing facts science, and acting serious research takes time-longer than an agile cycle (at the order of months). It takes extra time than is to be had in many businesses for an undertaking sprint, which means today's carried out researcher is more than pressed for time. Statistics technological know-how is stuck within the antique-faculty software program agenda referred to as the waterfall technique.

Our hassle and our opportunity come at the intersection of those two developments: how can we contain facts technological know-how, that's applied studies and requires exhaustive effort on an unpredictable timeline, into the agile application? How can analytics applications do better than the waterfall technique that we've long because left at the back of? How can we craft programs for unknown, evolving facts models? How can we broaden new agile techniques to suit the records science procedure to create terrific products?

This eBook tries to synthesize two fields, agile development and data technology on large datasets; to meld research and engineering into a efficient relationship. To acquire this, it gives a brand new agile method and examples of constructing merchandise with an appropriate software program stack. The methodology is designed to maximize the advent of software capabilities primarily based at the most penetrating insights. The software stack is a light-weight toolset which could deal with the uncertain, shifting sea of uncooked information and promises enough productivity to allow the agile system to be successful. The book is going on to expose you a way to iteratively build fee using this stack, to get returned to agility and mine facts to turn it into bucks.

Agile information technology objectives to put you again inside the driving force's seat, making sure that you're carried out research produces useful products that meet the desires of real users.

2. II. Definition

What is Agile information technological knowhow (ads)? in this bankruptcy I define a new method for analytics product development, something I hinted at inside the first version but did now not specific in detail.

To begin, what is the purpose of the commercials procedure?

3. III. Methodology as Tweet

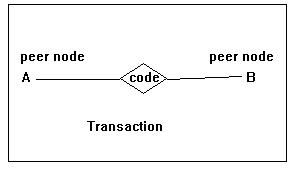

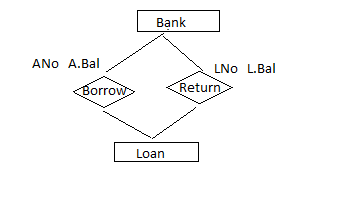

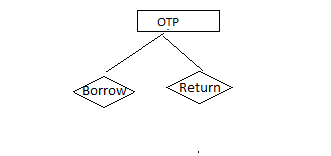

The intention of the Agile statistics technology procedure is to record, facilitate, and manual exploratory statistics evaluation to discover and comply with the essential path to a compelling analytics product (parent 1-1. Agile data technology "goes meta" and places the lens on the exploratory information evaluation technique, to file insight because it happens. This turns into the primary pastime of product development. by means of "going meta," we make the technique awareness on something that is predictable, that can be managed, in preference to the product output itself, which can't.

4. IV. Agile Data Science Manifesto

Agile facts technological know-how is prepared round the subsequent principles:

? Iterate, iterate, and iterate: tables, charts, reports, predictions.

? Deliver intermediate output. Even failed experiments have output.

? Prototype experiments over implementing tasks.

? Combine the tyrannical opinion of records in product management. ? Climb up and down the statistics-fee pyramid as we paintings.

? Discover and pursue the important course to a killer product. ? Get Meta. Describe the procedure, not just the quit country.

Perception comes from the twenty-5th question in a chain of queries, now not the first one. Information tables need to be parsed, formatted, sorted, aggregated, and summarized before they can be understood. Insightful charts typically come from the 0.33 or fourth attempt, now not the primary. Building correct predictive models can take much iteration of feature engineering and hyperparameter tuning. In statistics technological know-how, generation is the essential element to the extraction, visualization, and productization of perception. While we build, we iterate.

5. V. Ship Intermediate Output

New release is the vital act in crafting analytics programs, this means that we're often left on the end of a dash with matters that aren't entire. If we didn't deliver incomplete or intermediate output via the stop of a dash, we'd frequently become delivery not anything at all. And that isn't agile; I call it the "death loop," where infinite time may be wasted perfecting matters no one desires.

Precise structures are self-documenting, and in Agile statistics technology we document and share the incomplete belongings we create as we work. We commit all work to source control. We proportion this work with teammates and, as soon as possible, with end users. This principle isn't obvious to anyone. Many statistics scientists come from instructional backgrounds, in which years of excessive research effort went into a unmarried huge paper called a thesis that led to a sophisticated degree.

6. VI. Prototype Experiments Over Enforcing Obligations

In software program engineering, a product manager assigns a chart to a developer to enforce throughout a dash. The developer interprets the assignment right into a square institution by and creates an internet web page for it. Task accomplished? Wrong. Charts which can be designated this manner are not going to have cost. technology team approach overseeing more than one concurrent experiments more than it approach handing out obligations. Suitable belongings (tables, charts, reviews, predictions) turn out to be artifacts of exploratory records analysis, so we should think more in terms of experiments than responsibilities.

7. VII. Combine the Tyrannical Opinion of Facts

What's possible is as crucial as what is supposed. What is simple and what is difficult are as important matters to understand as what is favored. In software utility improvement there are three views to don't forget: those of the clients, the developers, and the commercial enterprise. In analytics application development there's every other attitude: that of the statistics. Without knowledge what the facts "has to mention" about any function, the product owner can't do a very good process. The information's opinion should always be blanketed in product discussions, which means that that they have to be grounded in visualization through exploratory records analysis inside the inner utility that turns into the focus of our efforts.

8. VIII. Climb up and down the Facts-fee Pyramid

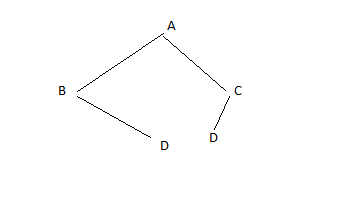

The information-value pyramid (discern 1-2) is a five-degree pyramid modeled after Maslow's hierarchy of needs. It expresses the increasing quantity of price created when refining raw statistics into tables and charts, followed by means of reports, then predictions, all of that's supposed to allow new actions or enhance existing ones:

? The first degree of the facts-fee pyramid (facts) is about plumbing; creating a dataset go with the flow from where it's miles amassed to in which it appears in an utility. ? The charts and tables layer is the level in which refinement and evaluation starts off evolved. ? The reports layer enables immersive exploration of facts, in which we can sincerely motive about it and get to comprehend it. ? The predictions layer is in which more fee is created, however growing top predictions method characteristic engineering, which the decrease degrees embody and facilitate.

? The final level, actions, is in which the AI (artificial intelligence) craze is taking location. In case your perception doesn't enable a new motion or improve an existing one, it isn't very valuable. The data-cost pyramid gives structure to our paintings. The pyramid is something to maintain in mind, not a rule to be observed. On occasion you skip steps, every now and then you figure backward. If you pull a new dataset at once into a predictive model as a function, you incur technical debt in case you don't make this dataset transparent and reachable by using including it in your utility information model inside the decrease ranges. You have to preserve this in thoughts, and pay off the debt as you're able.

9. IX. Find Out and Pursue the Vital Direction to a Killer Product

To maximize our odds of fulfillment, we should awareness most of our time on that issue of our software this is most critical to its success. But which factor is that? This need to be observed through experimentation. Analytics product improvement is the search for and pursuit of a shifting aim. Year 2022

( ) C © 2022 Global JournalsOnce an intention is determined, as an instance a prediction to be made, then we should discover the critical course to its implementation and, if it proves valuable, to its improvement. Facts is refined grade by grade because it flows from assignment to undertaking. Analytics merchandise regularly require more than one tiers of refinement, the employment of good sized ETL (extract, rework, load) processes, techniques from records, information get entry to, system mastering, synthetic intelligence, and graph analytics.

The interplay of those stages can shape complicated webs of dependencies. The group chief holds this internet in his head. It's far his process to make certain that the team discovers the crucial route and then to organize the team round finishing it. A product supervisor cannot manipulate this procedure from the top down; rather, a product scientist must find out it from the lowest up.

10. X. Get Meta

If we will't without problems deliver precise product belongings on a time table akin to growing a ordinary application, what is going to we ship? If we don't deliver, we aren't agile. To remedy this problem, in Agile records science, we "get meta." The focal point is on documenting the analytics procedure in preference to the end state or product we're searching for. This shall we us be agile and ship intermediate content as we iteratively climb the information-fee pyramid to pursue the important direction to a killer product. So where does the product come from? From the palette we create by means of documenting our exploratory statistics evaluation.

11. XI. Amalgamation

These seven ideas work collectively to drive the Agile facts technology methodology. They serve to structure and file the manner of exploratory records evaluation and transform it into analytics applications. So that is the middle of the technique. However why? How did we get right here? Permit's check a waterfall challenge to understand the issues these forms of initiatives create.

12. XII. The Delinquent with the Waterfall

I should provide an explanation for and get out of the way the truth that career Explorer was the first recommender machine or certainly predictive model that I had ever built. Lots of its failure changed into due to my inexperience. My experience was in iterative and agile interactive visualization, which appeared a good match for the dreams of the venture, but simply the advice mission changed into extra difficult than have been anticipated within the prototype-because it turned out, a good deal more paintings became wished on the entity decision of activity titles than become foreseen.

On the identical time, issues with the method hired at the product concealed the real country of the product from management, who had been quite pleased with static mock-America handiest days earlier than launch. Remaining-minute integration revealed bugs within the interfaces between components that had been exposed to the consumer. A tough closing date created a crisis while the product proved unshippable with best days to move. In the end, I stayed up for the better a part of a week resubmitting Hadoop jobs every 5 minutes to debug final-minute fixes and adjustments, and the product was simply barely correct enough to exit. This grew to become out now not to count number an awful lot, as customers weren't in reality interested by the product idea. In the end, plenty of work become thrown away handiest months after launch.

The important thing problems with the mission have been to do with the waterfall method hired:

? The utility concept changed into simplest examined in consumer consciousness corporations and managerial opinions, and it did not honestly interact user interest. ? The prediction presentation turned into designed up front, with the actual version and its behavior being an afterthought. Things went something like this:

"We made a super layout! Your job is to expect the future for it."

"What is taking see you later to reliably are expecting the destiny?" "The customers don't recognize what 86% real means." Plane ? Mountain.

? Charts had been detailed via product/layout and failed to gain real insights. ? A tough deadline was laid out in a contract with a purchaser. ? Integration trying out took place at the stop of development, which induced a cut-off date crisis. ? Mock-u.s.a. without actual records were used during the assignment to provide the software to focus organizations and to management. This is all pretty standard for a waterfall mission. The end result changed into that management concept the product turned into on target with only weeks to move whilst integration subsequently found out problems. Word that Scrum became used in the course of the venture; however the give up product become in no way able to be examined with cease customers, consequently negating the complete factor of the agile technique hired. To sum it up, the plane hit the mountain. By contrast, there has been some other ( )

13. C

© 2022 Global Journals assignment at LinkedIn called In Maps that I led development on and product managed. It proceeded a good deal more easily due to the fact we iteratively posted the utility the usage of real records, exposing the "broken" nation of the software to inner customers and getting remarks across many release cycles. It was the evaluation among those tasks that helped formalize agile records technological know-how in my thoughts.

But if the method hired on career Explorer changed into definitely Scrum, why became it a waterfall project? It turns out that analytics products constructed through information technology teams will be predisposed to "pull" towards the waterfall. I might later discover the cause for this tendency.

14. XIII. Research as Opposed to Software Development

It seems that there is a primary war in shipping analytics products, and this is the conflict between the studies and the utility development timeline. This warfare has a tendency to make every analytics product a waterfall project, even people who set out to use a software engineering technique like Scrum.

Research, even implemented research, is technological know-how. It entails iterative experiments, in which the studying from one experiment informs the following experiment. Science excels at discovery, but it differs from engineering in that there is no specific endpoint (see figure 1-2). In other phrases: engineering is unique, and technological know-how is uncertain. Even incredibly new fields such as software program engineering, wherein estimates are frequently off through 100% or more, are greater sure than the medical technique. This is the impedance mismatch that creates the hassle.

In records science, the technological know-how element usually takes a lot longer than the engineering component, and to make matters worse, the quantity of time a given test will take is uncertain. Uncertainty in duration of time to make operating analytics assetstables, charts, and predictions-tends to purpose stand-ins for use in location of the real component. These outcomes in feedback on a mock-up using the improvement system, which aborts agility. That is a venture killer.

The answer is to get agile... but how? How do agile software program methodologies map to statistics technological know-how, and in which do they fall short?

15. XIV. The Trouble with Agile Software Program

Agile software isn't Agile data technological know-how. In this segment we'll have a look at the troubles with mapping something like Scrum without delay into the facts technological know-how technique.

16. XV. Subsequent Quality: Sponsoring Technical Obligation

Technical obligation is defined by way of Techopedia as "a idea in programming that reflects the more improvement paintings that arises whilst code that is easy to enforce in the quick run is used in place of making use of the first-rate typical answer." Understanding technical debt is crucial with regards to handling software utility improvement, because deadline stress can bring about the creation of massive amounts of technical debt. This technical debt can cripple the team's potential to hit future cut-off dates.

Technical debt is distinctive in facts technology than in software program engineering. In software engineering you retain all code, so first-class is paramount. In records technology you generally tend to discard most code, so this is much less the case. In information technology we have to take a look at in the whole lot to supply control but have to tolerate a better diploma of ugliness until something has proved useful enough to preserve and reuse. In any other case, applying software engineering requirements to records technological know-how code would reduce productiveness a splendid deal. On the equal time, a splendid deal of quality can be imparted to code through forcing a few software engineering knowledge and habits onto teachers, statisticians, researchers, and facts scientists.

In facts technological know-how, via comparison to software engineering, code shouldn't continually be excellent; it must be sooner or later desirable. Because of this a few technical debt up the front is appropriate, goodbye as it isn't always immoderate. Code that becomes critical should be able to be cleaned up with minimum effort. It doesn't have to be top at any second; however as soon as it becomes important, it ought to turn out to be top. Technical debt bureaucracy part of the internet of dependencies in dealing with an agile facts technology project. This is a fairly technical venture, necessitating technical skills within the group leader or a process that surfaces technical debt from different individuals of the group.

Prototypes are financed on technical obligation, which is paid off simplest if a prototype proves beneficial. Maximum prototypes can be discarded or minimally used, so the technical debt is never repaid. This allows an awful lot more experimentation for fewer sources. This also takes place within the form of Jupyter and Zeppelin notebooks, which vicinity the emphasis on direct expression rather than code reuse or manufacturing deployment.

17. XVI. The Twitch of the Waterfall

The heap of present day "big data" software is a whole lot greater complex than that of regular software. Additionally, there may be a very wide skillset required to construct analytics programs at scale the usage of those structures. This wide pipeline in phrases of people and era can bring about a "pull" closer to the waterfall even for groups determined to be agile.

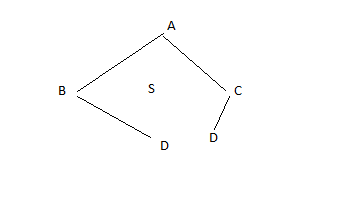

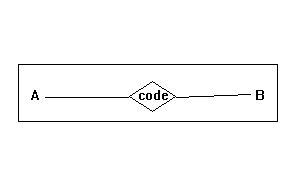

Parent 1-5 suggests that if responsibilities are completed in sprints, the thickness of the stack and group the combine to force a return to the waterfall model. On this example a chart is favored, so a records scientist uses Spark to calculate the statistics for one and places it into the database. Subsequent, an API developer creates an API for these facts, observed by means of an internet developer growing a web web page for the chart. A visualization engineer creates the real chart, which a fashion designer visually improves. Eventually, the product supervisor sees the chart and iteration is required. It takes an prolonged duration to make one leap forward. Progress could be very gradual, and the team is not agile. This exemplifies a few matters. The primary is the want for generalists who can accomplish multiple associated undertaking. But greater importantly, it indicates that it's miles vital to iterate within sprints in preference to iterating in cubicles among them. Otherwise, in case you wait a whole sprint for one team member to enforce the preceding team member's work, the method tends to become a type of stepped pyramid/waterfall.

18. XVII. The Statistics Science Technique

Having delivered the technique and described why its miles needed, now we're going to dive into the mechanics of an Agile facts science crew. We begin with putting expectations, then observe the jobs in a data technological know-how team, and sooner or later describe how the process works in exercise. While i hope this serves as an creation for readers new to records technological know-how groups or new to Agile records science, this isn't an exhaustive description of ways agile techniques work in well-known. Readers new to agile and new to information technology are advocated to consult a e-book on Scrum earlier than ingesting this chapter. Now permits communicate approximately setting expectancies of information technological knowhow groups, and how they interact with the rest of the employer.

19. XVIII. Setting Anticipations

Before we have a look at the way to compose information technology groups and run them to provide actionable insights, we first need to discuss how a records technology group fits into an organization. As the focus of data science shifts in agile information technological know-how from a pre-decided outcome to a description of the carried out studies technique, so need to the expectancies for the crew alternate. Similarly, the manner records technology teams relate to different groups is impacted.

"While can we deliver?" is the query control desires to recognize the answer to which will set expectations with the purchaser and coordinate sales, marketing, recruiting, and other efforts. With an Agile statistics science group, you don't get a directly answer to that query. There is no particular date X while prediction Y may be shippable as an internet product or API. That metric, the ship date of a predetermined artifact, is something you sacrifice while you undertake an Agile data technological know-how system. What you get in go back is proper visibility into the paintings of the crew in the direction of your enterprise dreams in the form of working software that describes in element what the team is certainly doing. With these facts in hand, other business tactics can be aligned with the actual fact of records technological know-how, in preference to the fiction of a recognized shipping date for a predetermined artifact. With a variable intention, any other query turns into just as vital: "what's going to we Year 2022 ( )

20. C

© 2022 Global Journals deliver?" or, more likely, "what will we deliver, while?" to answer those questions, any stakeholder can take a look at the utility because it exists nowadays in addition to the plans for the subsequent dash and get a feel of wherein things are and where they're moving.

With those two questions addressed, the business enterprise can paintings with a statistics technological know-how group as the artifacts of their paintings evolve into actionable insights. A facts technology group have to be tasked with discovering price to cope with a fixed of business issues. The form the output in their work takes is determined via exploratory studies. The date whilst the "final" artifacts will be ready may be estimated by careful inspection of the contemporary nation in their work. With these facts in hand, even though it is extra nuanced than a "deliver date," managers positioned around a records science crew can sync their work and schedules with the crew. In other words, we are able to't let you know precisely what we will ship, while. However in trade for accepting this truth, you get a steady, shippable progress record, in order that by using taking part inside the truth of doing facts technology you may use these records to coordinate other efforts. That is the trade-off of Agile facts technological know-how. For the reason that schedules with pre-exact artifacts and deliver dates generally include the incorrect artifacts and unrealistic dates, we sense this change-off is a great one. In reality, it is the handiest one we will make if we face the truth of doing facts technological know-how.

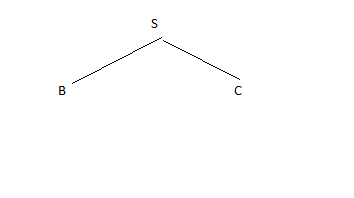

21. XIX. Data Knowledge Team Roles

Merchandise is built by groups of human beings, and agile methods focus on people over method. Data knowledge is a large area, spanning evaluation, design, development, business, and research. The roles of Agile statistics technology group participants, defined in a spectrum from patron to operations, look something like discern 1-6. the design around the facts to in shape the angle of the customer. This function is crucial, as the output of statistical fashions may be difficult to interpret by using "regular" customers who have no concept of the semantics of the version's output (i.e., how can something be 75% true?). ? Interplay designers design interactions around information fashions so users discover their cost.

? Net developers create the net programs that deliver facts to an internet browser. setup and operation of production information infrastructure. They automate deployment and take pages whilst matters pass wrong.

22. Spotting the opportunity and the problem

The vast skillset had to construct records products affords both an possibility and a trouble. If these competencies may be introduced to undergo by professionals in every position working as a team on a wealthy dataset, issues can be decomposed into components and directly attacked. Facts technological know-how is then an efficient meeting line, as illustrated in determine 1-7. But, as crew length increases to satisfy the need for know-how in those diverse areas, conversation overhead fast dominates. A researcher who is 8 folks faraway from clients is unlikely to resolve relevant issues and much more likely to remedy arcane problems. Likewise, team conferences of a dozen people are not going to be efficient. We'd break up this crew into more than one department and set up contracts of shipping among them, however then we lose both agility and concord. Ready on the output of research, we invent specifications, and shortly we discover ourselves lower back in the waterfall approach.

And yet we know that agility and a cohesive imaginative and prescient and consensus approximately a product are crucial to our fulfillment in building merchandise. The worst product-improvement hassle is one team operating on more than one imaginative and prescient. How are we to reconcile the extended span of understanding and the disjoint timelines of carried out studies, statistics technological know-how, software development, and design?

23. a) Adapting to Change

To remain agile, we ought to include and adapt to those new situations. We have to undertake adjustments in line with lean methodologies to stay productive.

Numerous modifications especially make a return to agility viable:

? Deciding on generalists over professionals ? Preferring small teams over large groups ? The use of high-degree tools and systems: cloud computing, distributed systems, and platforms as a provider (PaaS) In Agile information science, a small group of generalists makes use of scalable, excessive-stage tools and systems to iteratively refine facts into increasingly better states of price. We embody a software stack leveraging cloud computing, distributed systems, and structures as a carrier. Then we use this stack to iteratively publish the intermediate consequences of even our maximum in-depth research to snowball value from simple statistics to predictions and movements that create price and let us capture some of it to turn information into dollars. Allow's take a look at every object in element. Harnessing the energy of generalists.

In Agile information science, we value generalists over experts, as proven in figure 1-8. Design particularly is a vital position in the agile records technological know-how group. Layout does now not cease with look or experience. Design encompasses all aspects of the product, from architecture, distribution, and person experience to work environment.

| sure accurate and dependable predictions are |

| made. |

| ? Operations/DevOps engineers make sure clean |

| Year 2022 |

| 20 |

| ( ) C |

| © 2022 Global Journals |